ChatGPT Health Watches. OpenAI's Fine Print Explains How.

OpenAI Is Misleading People about ChatGPT Health's Privacy Protections

This is the third in a series on using AI for personal health.

Full Disclosure: I built HealthScout, an app to help patients navigate our complex healthcare system.

I was reading OpenAI’s Health Privacy Notice to answer one question: if a court ordered OpenAI to hand over user health data, could the company comply? If it could, that would confirm that health data is technically accessible to employees.

I never got to that question. Something stopped me on the way there.

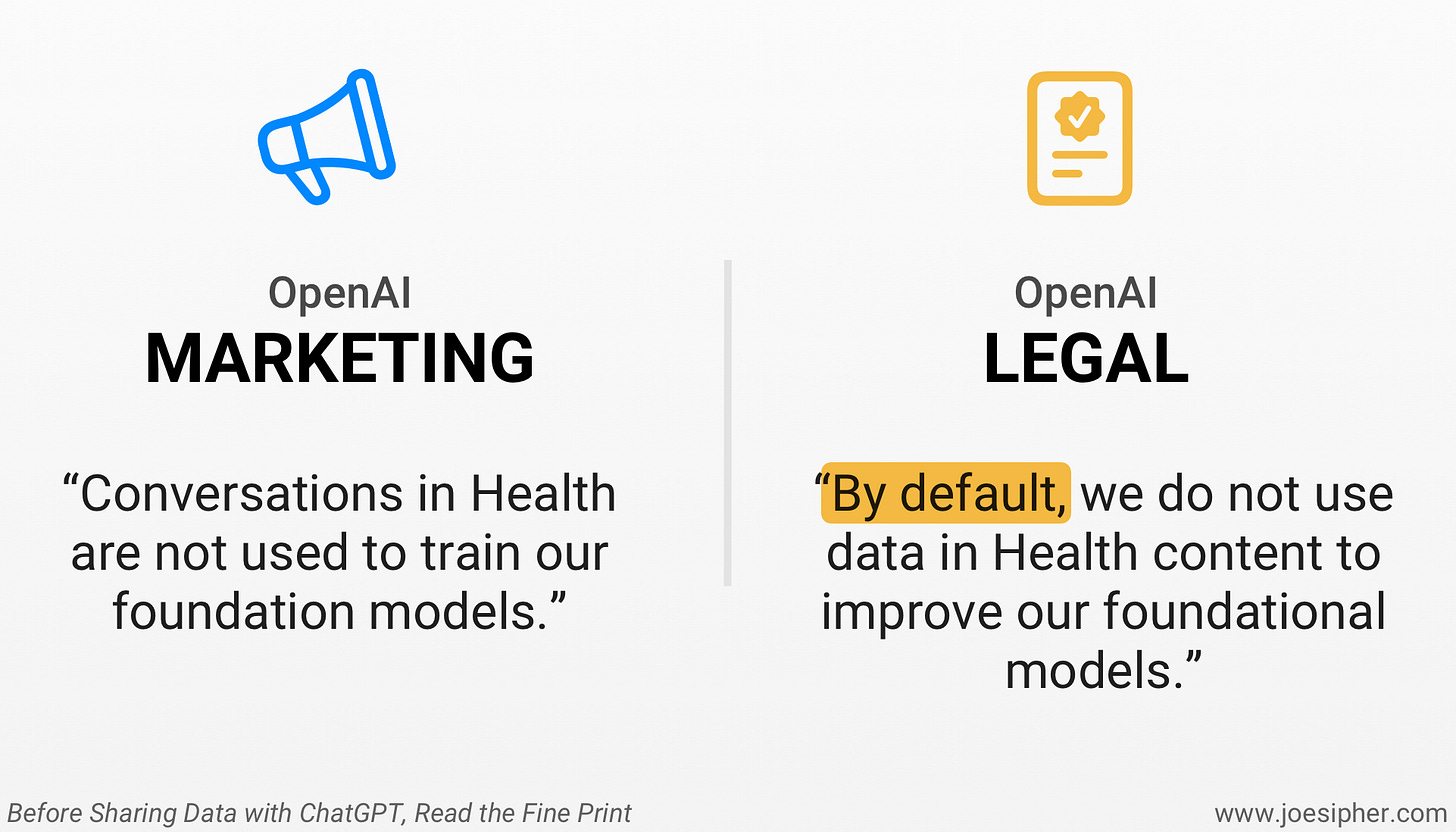

The ChatGPT Health launch page says:

“Conversations in Health are not used to train our foundation models.”

But the actual legal document, the Health Privacy Notice, reads:

“By default, we do not use data in Health content to improve our foundational models.”

Then again, a few paragraphs later: “By default, we do not use content in Health to improve our foundational models.”

“By Default” = There’s a Hole in their Privacy Architecture

Saying “Conversations in Health are not used to train our foundation models” is a commitment. “By default, we do not use content in Health” is a policy, one that can be changed. Policies get updated all the time, often announced with an email that no one will read.

The difference between “we don’t do this” and “we haven’t chosen to do this yet” is everything when the data involved is your mental health history, your ED meds, or your colonoscopy results.

The privacy notice also confirms that even under current policy, “a limited number of authorized OpenAI personnel and trusted service providers might access Health content to improve model safety.”

This means people can already reach ChatGPT Health data.

So training is not the only privacy risk. If employees can reach your health data, so can someone who phishes their way in, or an insider who decides their access is worth selling. And the answer to my original question about a court order? Yes. Because the data is accessible, OpenAI would be legally compelled to comply and share your health records.

Good Intentions Don’t Protect Your Data

To be fair, I think OpenAI is genuinely trying to build something useful. ChatGPT Health looks impressive, and the intent seems real.

But intentions don’t protect your data. Architecture does.

The difference is between “we’ve decided not to look at your data” and “we’ve built a system where we have nothing to hand over.” It’s privacy by policy versus privacy by design.

When we built HealthScout, we made privacy by design an architectural choice from day one, knowing it would constrain the business in some ways. HealthScout stores your health data encrypted using Apple’s highest-level encryption standard, Advanced Data Protection (ADP) Complete, where the keys are locked on your device behind hardware-backed Face ID or Touch ID authentication. Even Apple doesn’t have them.

In fact, the UK government demanded Apple create a backdoor to this data, and Apple’s response was to pull the feature from the UK entirely rather than comply. That’s the standard HealthScout is built on.

When you ask a question on HealthScout, a portion of your health data is encrypted, transmitted to our AI partners, and then deleted. Our AI partners are contractually prohibited from using any content from those queries. That’s a legal obligation between two companies, enforceable in court, not a policy that can flip with a privacy update email to unsuspecting users.

HealthScout also does not collect personally identifiable information (PII). No email collection. No account creation. We couldn’t identify our users even if we wanted to. If HealthScout ever received a court order demanding user health data, we’d have no ability to hand anything over because we don’t have it.

Before you connect your medical records to any AI system, it’s worth asking one question: is this company choosing not to use my data, or have they built a system where they simply don’t have it?

One of those is a promise. The other is a fact.

If you want AI that protects your health records and doesn’t watch what you ask, HealthScout is on the App Store now. No email. No account. We can’t see what you ask.